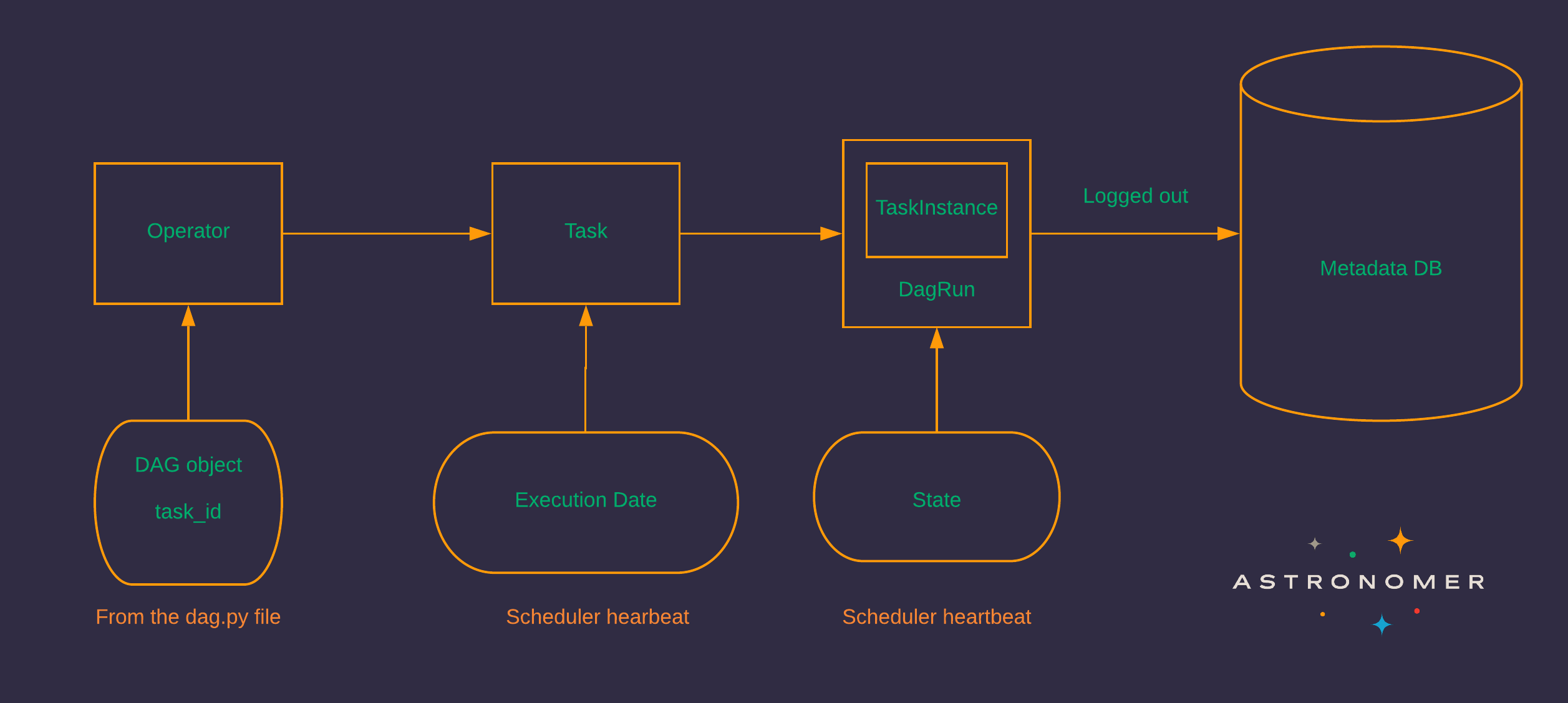

The following diagram illustrates a complex use case that can be accomplished with Airflow. Because of its extensibility, Airflow is particularly powerful for orchestrating jobs with complex dependencies in multiple external systems. Active and engaged OSS community: With millions of users and thousands of contributors, Airflow is here to stay and grow.Īirflow can be used for almost any batch data pipeline, and there are a significant number of documented use cases in the community.Ease of use: With the Astro CLI, you can run a local Airflow environment with only three bash commands.Stable REST API: The Airflow REST API allows Airflow to interact with RESTful web services.Visualization: The Airflow UI provides an immediate overview of your data pipelines.Infinite scalability: Given enough computing power, you can orchestrate as many processes as you need, no matter the complexity of your pipelines.High extensibility: For many commonly used data engineering tools, integrations exist in the form of provider packages, which are routinely extended and updated.Tool agnosticism: Airflow can connect to any application in your data ecosystem that allows connections through an API.CI/CD for data pipelines: With all the logic of your workflows defined in Python, it is possible to implement CI/CD processes for your data pipelines.Anything you can do in Python, you can do in Airflow. Dynamic data pipelines: In Airflow, pipelines are defined as Python code.With orchestration, actions in your data pipeline become aware of each other and your data team has a central location to monitor, edit, and troubleshoot their workflows.Īirflow provides many benefits, including: It is especially useful for creating and orchestrating complex data pipelines.ĭata orchestration sits at the heart of any modern data stack and provides elaborate automation of data pipelines. Why use Airflow Īpache Airflow is a platform for programmatically authoring, scheduling, and monitoring workflows. 
Airflow is used by thousands of data engineering teams around the world and adoption continues to accelerate as the community grows stronger. On December 17th 2020, Airflow 2.0 was released, bringing with it major upgrades and powerful new features. As of August 2022 Airflow has over 2,000 contributors, 16,900 commits and 26,900 stars on GitHub. The project joined the official Apache Foundation Incubator in April of 2016, and graduated as a top-level project in January 2019. To satisfy the need for a robust scheduling tool, Maxime Beauchemin created Airflow to allow Airbnb to quickly author, iterate, and monitor batch data pipelines.Īirflow has come a long way since Maxime's first commit. Airbnb data engineers, data scientists, and analysts had to regularly write scheduled batch jobs to automate processes. In 2015, Airbnb was growing rapidly and struggling to manage the vast quantities of internal data it generated every day.

See the Python Documentation.Īirflow started as an open source project at Airbnb. To get the most out of this guide, you should have an understanding of: You'll learn:įor a hands-on introduction to Airflow using the Astro CLI, see Get started with Apache Airflow. This guide offers an introduction to Apache Airflow and its core concepts. Airflow allows data practitioners to define their data pipelines as Python code in a highly extensible and infinitely scalable way. It has over 9 million downloads per month and an active OSS community. Apache Airflow is an open source tool for programmatically authoring, scheduling, and monitoring data pipelines.
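To make "pipelines defined as Python code" concrete, here is a minimal sketch of a three-step pipeline. It is an illustration only, not taken from the guide: the function names, the `example_etl` DAG id, and the sample values are hypothetical, and the wiring assumes Airflow 2.4 or later with the TaskFlow decorators (`airflow.decorators.dag` and `task`). The Airflow import lives inside `build_dag()` so that the plain Python steps can also be run and tested without Airflow installed.

```python
from datetime import datetime


def extract():
    """Pretend to pull raw values from a source system (hypothetical data)."""
    return [1, 2, 3]


def transform(values):
    """Double every value; stands in for real business logic."""
    return [v * 2 for v in values]


def load(values):
    """Pretend to write the values somewhere and return their total."""
    return sum(values)


def build_dag():
    """Wire the functions above into an Airflow DAG.

    Assumes apache-airflow >= 2.4 is installed. The import is kept local
    so that this module can be imported without Airflow.
    """
    from airflow.decorators import dag, task

    @dag(
        dag_id="example_etl",          # hypothetical DAG id
        start_date=datetime(2023, 1, 1),
        schedule=None,                 # run only when triggered manually
        catchup=False,
    )
    def example_etl():
        # Passing one task's output to the next defines the dependency:
        # extract -> transform -> load
        raw = task(extract)()
        doubled = task(transform)(raw)
        task(load)(doubled)

    return example_etl()


if __name__ == "__main__":
    # The steps are ordinary Python, so they can be exercised directly.
    print(load(transform(extract())))
```

In an actual DAG file, you would call `build_dag()` at module level (for example `etl_dag = build_dag()`) so that Airflow can find the resulting DAG object when it parses the file. Because each step is an ordinary function, the same code can be unit tested in a CI/CD process before it is deployed.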
